SEMINARS & PRESENTATIONS

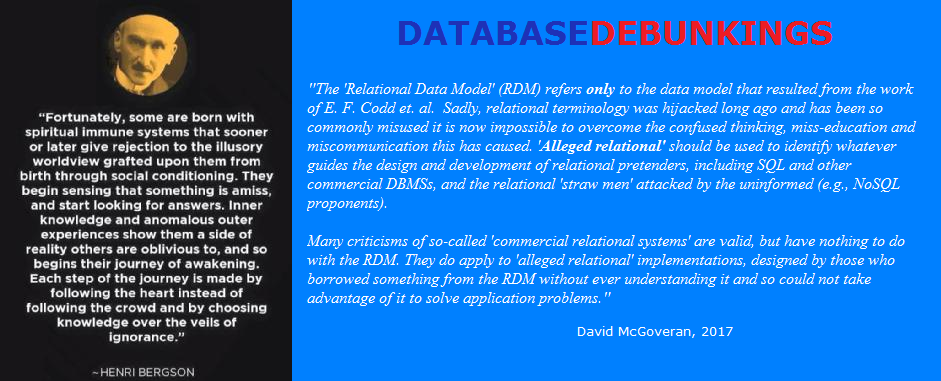

For the last several years my work has been grounded in David McGoveran's new, forthcoming re-interpretation, extension and formalization of Codd's RDM, LOGIC FOR SERIOUS DATABASE FOLKS (early draft chapters). It differs significantly from the common understanding in the industry (such as it is), which is grounded in Date and Darwen's interpretation.

The seminars and presentations we have offered since the 2000s reflected Date and Darwen's interpretation and, consequently, must be revised to reflect McGoveran's.

Please contact us if you are interested in on-site custom seminars or presentations.
